The business world can’t stop talking about digital transformation, and 2020 is expected to see the rapid scaling of digital initiatives across all sectors. When it comes to DevOps (often intertwined with the concept of digital transformation), however, business leaders are still scratching their heads. What DevOps really means for their business, their technology investments, and their teams seems difficult to grasp. Yet digital transformation and DevOps are two sides of the same coin. In this article, we’ll explore how the two relate and what it takes to implement a DevOps approach.

Digital transformation is being driven by customer, partner, and supplier interactions that are increasingly moving into the digital realm. Customers, spoiled by fast and reliable interactions provided by cloud-born innovators like Netflix, Uber, or Monzo (a cloud-born UK bank), now demand the same level of convenience from more traditional enterprises. Today, software has become a key strategic business differentiator, and its fast, reliable, and convenient delivery is at the core of digital transformation.

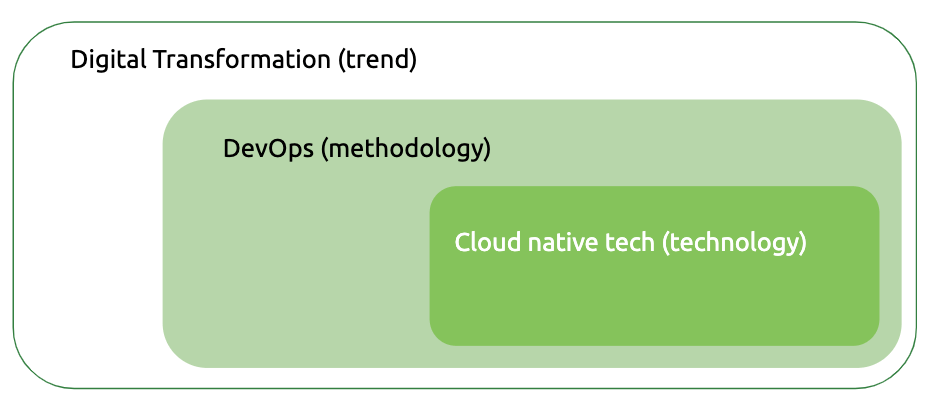

In simple terms, DevOps is the methodology that enables this fast, frequent, and uninterrupted service delivery, and cloud native technologies make such a methodology possible.

Implementing a DevOps approach requires a complete shift in technology, processes, and culture, and it certainly isn’t easy. Yet, as the annual “Accelerate State of DevOps Report 2019” shows, the benefits are staggering: “elite” DevOps performers deploy 208 times more frequently and have a 106 times faster lead time from commit to deploy than low performers, that is, teams using a more traditional approach. They also recover from incidents 2,604 times faster and have a seven times lower change failure rate.

While these stats may seem too good to be true, the report has confirmed this performance gap year after year. So if you ever thought DevOps isn’t worth the effort, think again.

How DevOps Came to Be

Inspired by the Lean manufacturing movement of the 1980s, DevOps is seen as a logical continuation of Agile (2000s). As great as DevOps concepts are, however, they wouldn’t have been feasible without the technological innovation brought to us by the cloud. For the first time, the cloud enabled developers to create environments on demand, and on-demand cloud services gave them the tools they needed at the click of a button. The result: unprecedented developer productivity, a world hooked on the cloud, and the beginning of a new IT era.

This newfound productivity gave rise to a whole new breed of technologies: the so-called cloud native stack. Like cloud managed services, cloud native technologies provide, among other things, services such as storage, messaging, or service discovery, all available at the click of a button. Unlike cloud managed services, however, they are infrastructure-independent, configurable, and, in some cases, more secure.

The term cloud native can be a little misleading. While these technologies were developed for the cloud, they are not cloud-bound. In fact, we are increasingly seeing enterprises deploy them on-premises.

IT Before DevOps

To appreciate the benefits of DevOps, we have to understand how IT traditionally operates. In complex organizations with tightly coupled, monolithic apps (basically any traditional enterprise with legacy apps), work is generally fragmented across multiple groups. This leads to many handoffs and long lead times: each time a piece is ready, it’s placed in a queue for the next team. Furthermore, individuals only work on one small piece of a project at a time, which leads to a lack of ownership. Their goal is to get the work to the next group, not to deliver the right functionality to the customer. It’s a clear misalignment of priorities, yet one that is deeply ingrained in the system.

Additionally, traditional IT had only a few integration test environments, that is, environments where developers could test whether their code worked with all its dependencies, such as databases or external services. Ensuring smooth integration early on is key to reducing risk, so this scarcity meant integration problems were often discovered late.

By the time code finally got into production, it had passed through so many developers and waited in so many queues that, if it didn’t work, tracing the origin of the problem was difficult. Months had passed, and whoever had worked on it could hardly remember what they had done. Identifying and fixing the problem was slow and resource-intensive.

If this sounds like a nightmare, it certainly felt like one, especially for the Ops team, who were responsible for smooth deployments. A service disruption can have huge implications for any business. Just think of hundreds or thousands of customers complaining on social media, a potential PR disaster, or, depending on the case and business, the outage even making the evening news. Would you like to be responsible for pushing the deploy button?

DevOps, a 10,000-Foot View

The main goal of DevOps is to create a workflow that moves from left to right with as few handoffs as possible and fast feedback loops. What does that mean? Work (in our case, code) should only move forward, from left to right, and never be sent back to be fixed. Problems should be identified and fixed when and where they are introduced. For that to happen, developers need fast feedback loops, which are provided by quick automated tests that validate whether the code works as it should before it moves to the next stage.
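To make the left-to-right idea more concrete, here is a minimal Python sketch of a pipeline that only lets a change move forward when each stage’s quick automated check passes. The `Stage` class, the `run_pipeline` function, and the two example checks are hypothetical stand-ins for real test suites, not part of any particular CI tool.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Stage:
    name: str
    check: Callable[[str], bool]  # fast automated test; True means the change passes


def run_pipeline(change: str, stages: List[Stage]) -> Optional[str]:
    """Move a change left to right through the stages.

    Returns None if every stage passes, otherwise the name of the first
    failing stage. A failing change stops there and never moves forward.
    """
    for stage in stages:
        if not stage.check(change):
            return stage.name
    return None


# Hypothetical stages standing in for real unit and integration test suites.
STAGES = [
    Stage("unit-tests", lambda change: "bug" not in change),
    Stage("integration-tests", lambda change: len(change) < 200),
]

if __name__ == "__main__":
    print(run_pipeline("small fix", STAGES))     # None
    print(run_pipeline("fix with bug", STAGES))  # unit-tests
```

Because the function reports the first stage that rejected the change, the developer learns immediately which check caught the problem, while the work is still fresh in their mind.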

To reduce handoffs and increase a sense of ownership, small groups work on smaller pieces of functionality (rather than an entire feature) and own the entire process, from creating the request to commit, QA, and deployment: from Dev to Ops, hence DevOps. The focus is on pushing small pieces of code out quickly. The smaller the change going into production, the easier it is to diagnose, fix, and remediate.

The results are minimized handoffs, reduced risk when deploying into production, better code quality (since teams are also responsible for how their code performs in production), and increased employee satisfaction thanks to more autonomy and ownership.

To achieve this, you need the right technology, but you must also reorganize your entire IT department. Having small groups own an entire process means developers will have to broaden their skills and radically change the way they work. Experience has shown that the cultural change DevOps brings about is much harder than adopting new technologies.

At its core, DevOps is about cultural change: small groups working on small pieces from start to finish while focusing on global goals, which always take priority over individual or group goals. DevOps tools are used to reinforce this culture and accelerate the desired behavior.

Key Technology Developments for DevOps

DevOps seems to be mainly about organizational structure, and everything mentioned above sounds like common sense. So why did we fragment work and create huge monoliths that are difficult to diagnose and manage in the first place? While smaller code batches and teams that are accountable from start to finish aren’t a technology decision per se, technology is what has enabled these team structures. Here are some of the key developments that made that transformation possible:

Containers, which are used to “package” and “ship” code, are a lot more lightweight than virtual machines (VMs), which were previously the standard way to do so (we cover containers and VMs in our Kubernetes primer). Because VMs consume a lot of resources, shipping small batches of code in VMs didn’t make economic sense: it was just too expensive. Containers, on the other hand, consume very few resources, enabling developers to work on and ship even just a few lines of code.

On-demand environments allow developers to spin up a new development or QA environment whenever they need one. Traditionally, developers had to request an environment from the operations team, and that request would first land in a (long) queue. Ops then had to provision it manually. Waiting weeks, sometimes even months, for an environment was completely normal. Then the cloud introduced on-demand environments, a revolution at the time. Through a user interface, a command-line interface (CLI), or an application programming interface (API), developers specify what they need (e.g., the number of VMs, RAM, CPU, and storage), and the environment is provisioned with just a few clicks.

Automated testing enables developers to run QA tests themselves. As soon as the code is done, they run the tests, sometimes even in parallel, and get instant feedback. They can then fix the code and run the tests again. Getting timely feedback while memory is still fresh is critical. Traditionally, tests were performed by the QA team, but only after the code had waited in line for its turn. Tests were manual and error-prone, and feedback came weeks or months after the developer had worked on the code. Under these circumstances, identifying potential sources of errors was much more difficult.

Automated deployments allow developers to deploy code themselves. Pre-deployment tests ensure the code is good to go before it is automatically pushed into production. Before such automated tests existed, there were many more unknowns, and each deployment represented a huge risk. That’s why only the operations team was allowed to deploy, and they did so in a carefully planned manner, always prepared for potential disruption.

More loosely coupled architectures based on containers. Another benefit of containers is that all code dependencies are bundled inside the container, making it largely environment-independent. This leads to a modular architecture in which each piece, isolated in a container, can be removed, updated, or exchanged at any time without affecting other pieces, as long as the interface it exposes stays the same. This allows developers to make changes safely and with more autonomy, increasing developer productivity. In a tightly coupled system, on the other hand, changes can have unexpected consequences, introducing a level of risk that encourages fewer, not more, deployments.
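Containers enforce this modularity at the deployment level, but the same principle applies in code: a component that depends only on a contract can have the implementation behind it exchanged without being touched. The Python sketch below illustrates the idea with a hypothetical pricing service. It is an analogy, not a container example; in a containerized system, the “contract” would typically be a service’s network API rather than a Python `Protocol`.

```python
from typing import Dict, List, Protocol


class PriceService(Protocol):
    """Contract that other components depend on (hypothetical)."""

    def price_of(self, sku: str) -> float: ...


class FixedPriceService:
    """Original implementation: looks prices up in a table."""

    def __init__(self, prices: Dict[str, float]) -> None:
        self._prices = prices

    def price_of(self, sku: str) -> float:
        return self._prices[sku]


class DiscountedPriceService:
    """Replacement implementation: wraps another service and applies a discount."""

    def __init__(self, base: PriceService, discount: float) -> None:
        self._base = base
        self._discount = discount

    def price_of(self, sku: str) -> float:
        return round(self._base.price_of(sku) * (1 - self._discount), 2)


def order_total(prices: PriceService, skus: List[str]) -> float:
    """Checkout logic knows only the contract, so either implementation can be plugged in."""
    return round(sum(prices.price_of(sku) for sku in skus), 2)


if __name__ == "__main__":
    base = FixedPriceService({"a": 10.0, "b": 5.0})
    print(order_total(base, ["a", "b"]))
    print(order_total(DiscountedPriceService(base, 0.1), ["a", "b"]))
```

Swapping `FixedPriceService` for `DiscountedPriceService` changes nothing in `order_total`, which is exactly the property that lets one piece be updated without a coordinated change across the system.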

When these technologies come together with others, such as continuous delivery, microservices, service meshes, call tracing, and cloud native monitoring and log collection, advanced software delivery scenarios become possible.

Canary deployment, for example, is a well-known delivery technique that rolls out new code into production iteratively. The new code is first released to a small segment, say 1% of the user base, and then, as long as all goes smoothly, gradually to the rest. The old code stays in place while traffic is gradually rerouted to the new version. The behavior of the new code is constantly monitored and, if it doesn’t increase the error rate, the rollout continues. Otherwise, it’s rolled back for review and bug fixing. That’s a far cry from the scenario described above.
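The following Python sketch shows the two core mechanics of a canary release under simplifying assumptions: routing a stable fraction of users to the new version, and widening that fraction step by step only while the new version’s error rate stays close to the baseline. The `canary_error_rate` callback stands in for a real monitoring system and, like the step sizes and the tolerance, is a hypothetical choice. In practice, this logic is usually handled by a service mesh or a progressive delivery tool rather than hand-written code.

```python
import hashlib
from typing import Callable, Sequence


def routes_to_canary(user_id: str, fraction: float) -> bool:
    """Deterministically route roughly `fraction` of users to the new version."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:6], "big") / 2**48  # stable per user, spread over [0, 1)
    return bucket < fraction


def canary_rollout(
    steps: Sequence[float],
    canary_error_rate: Callable[[float], float],
    baseline_error_rate: float,
    tolerance: float = 0.005,
) -> float:
    """Widen the canary step by step.

    Returns the final share of traffic on the new version; 0.0 means the
    rollout was rolled back and all users are back on the old version.
    """
    for fraction in steps:
        observed = canary_error_rate(fraction)  # hypothetical monitoring hook
        if observed > baseline_error_rate + tolerance:
            return 0.0  # error rate went up: roll back for review and bug fixing
    return steps[-1] if steps else 0.0


if __name__ == "__main__":
    rollout_steps = [0.01, 0.05, 0.25, 0.5, 1.0]
    healthy = canary_rollout(rollout_steps, lambda f: 0.01, baseline_error_rate=0.01)
    faulty = canary_rollout(
        rollout_steps, lambda f: 0.05 if f >= 0.25 else 0.01, baseline_error_rate=0.01
    )
    print(healthy, faulty)
```

Hashing the user ID keeps each user’s assignment stable: a user who lands in the 1% segment stays on the new version as the rollout widens, so nobody flips back and forth between versions.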

Keep Pace with Change — Risks and Rewards

Technology is eliminating numerous bottlenecks, making a DevOps approach feasible. Previously, IT departments did the best they could with what they had. Today, however, a traditional IT structure is no longer sustainable. The world is moving ever faster, and if IT can’t keep up, chances are your company will be left behind. That’s why we are seeing everyone rapidly jumping on the digital transformation bandwagon. Yet rushing to adopt these new technologies may lead to suboptimal architectures that will have to be rearchitected yet again in the near future. It’s important to follow best practices and avoid shortcuts, however tempting they may be.

In part two of this article, we dig a little deeper. We discuss how developers can get fast feedback on code quality, what DevOps-oriented teams and architectures look like, and the role of telemetry and security. In short, you’ll get a better sense of what it takes to implement an enterprise-wide DevOps approach.